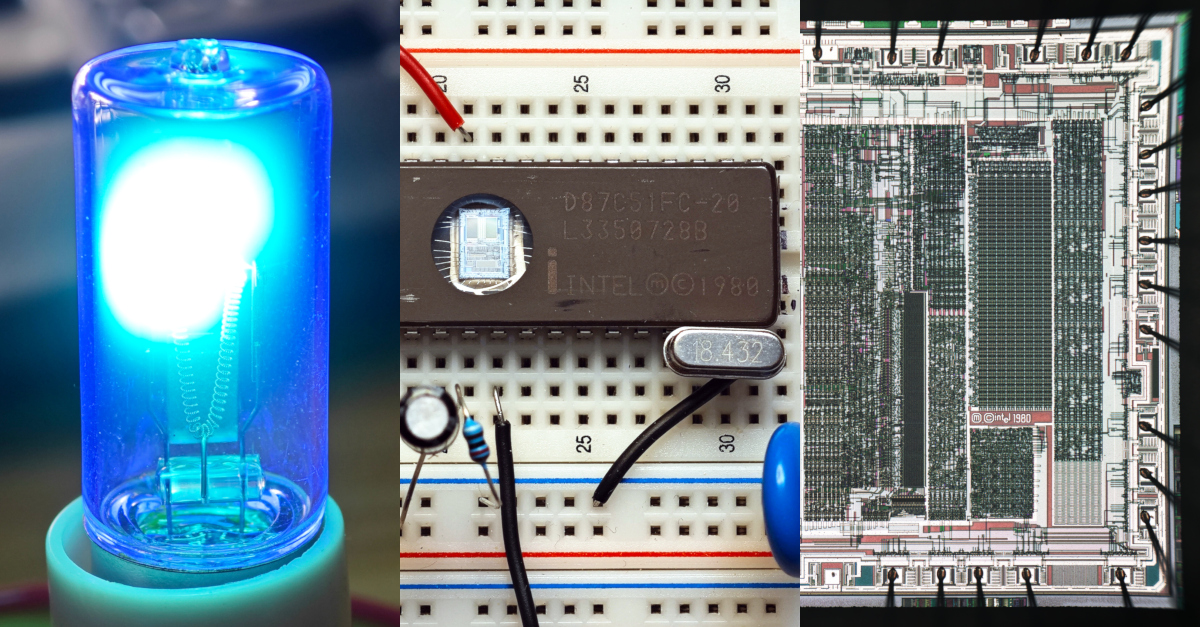

Programming Intel 87C51 - first high-volume integrated microcontroller (1980)

Today we are used to the luxury of fully integrated microcontrollers, where all key components are conveniently integrated into a single reliable part: non-volatile memory, SRAM, CPU core, PLL, ADC/DAC, PWM, serial ports, etc. It was not like that in the past: embedded systems typically required lots of chips, until the Intel 8048 (MCS-48) was released in 1976 on n-MOS technology. Intel expected the 8048 to have a limited product lifetime, and only 4 years later, in 1980, it was replaced by the 8051 (MCS-51), which conquered the world. It was the first high-volume product to integrate 4KiB of ROM, 128 bytes of SRAM, GPIO and a serial port together with an 8-bit core on a single die. The 87C51FC variant used 32KiB of EPROM non-volatile memory instead of mask ROM and doubled the SRAM size (256 bytes), and the C-version was manufactured on a CMOS process, which makes it exceptionally modern for the time. It was not particularly fast: the simplest instructions took 12 clock cycles to execute, so even at 20MHz it managed only about 1.7 million instructions per second (20MHz / 12), and there is no 16-bit division instruction. Modern 8051-compatible cores are much faster and often execute instructions in a single cycle.

Recently I got my hands on a D87C51FC-20 and decided to experience the old ways of embedded software.
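Since the core only has an 8-bit divide (DIV AB), 16-bit division has to be done in software, classically with a shift-and-subtract loop. Below is a minimal Python model of that restoring-division technique; it illustrates the algorithm only and is not 8051 assembly or any particular compiler's runtime routine (the name `udiv16` is hypothetical).

```python
def udiv16(dividend: int, divisor: int) -> tuple[int, int]:
    """Unsigned 16-bit restoring division: returns (quotient, remainder).

    Handles one dividend bit per iteration, MSB first, the way a software
    routine on an 8-bit core without a 16-bit divide instruction would.
    """
    if not (0 <= dividend <= 0xFFFF and 0 < divisor <= 0xFFFF):
        raise ValueError("operands must be unsigned 16-bit, divisor non-zero")
    quotient = 0
    remainder = 0
    for bit in range(15, -1, -1):
        # Shift the next dividend bit into the partial remainder
        # (this can briefly need a 17th bit; a carry flag covers it in hardware).
        remainder = (remainder << 1) | ((dividend >> bit) & 1)
        quotient <<= 1
        if remainder >= divisor:
            # Subtraction succeeds: this quotient bit is 1.
            remainder -= divisor
            quotient |= 1
    return quotient, remainder


if __name__ == "__main__":
    print(udiv16(0xFFFF, 10))  # (6553, 5)
```

Each of the 16 iterations costs several 12-clock instructions on the original core, which is why division was something to avoid in hot loops.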

Sony F828 and infrared photography

Back in the early 2000s I was looking for a camera to replace my compact one. I was choosing between the Sony F828 and DSLRs, especially the Sony α100. Eventually I chose the α100 (and don't regret it). Now, in 2024, I noticed a Sony F828 on a local marketplace and also learned that it was a truly unique camera: the only one with a CCD sensor using an RGBE Bayer matrix (I will need to measure the spectral response of this unusual filter set), and one that can be switched to infrared mode using a magnet hack (an external magnet can remove the IR-cut filter). This infrared (and ultraviolet!) capability is what interests me in 2024. This was the last Sony camera with this feature.

Here, an electric cooktop emits a lot of infrared, and its black glass is transparent to infrared, revealing the cooktop's internals:

Lichee Console 4A - RISC-V mini laptop : Review, benchmarks and early issues

I always liked small laptops and phones, but for some reason they fell out of favor with manufacturers ("bigger is more better"). If one wanted a tiny laptop, one of the few options was to fight for old Sony UMPCs on eBay, which are somewhat expensive even today. Recently, Raspberry Pi/CM4-based tiny laptops started to appear (Clockwork products are especially neat), but they do not fold like a laptop. When Sipeed announced the Lichee Console 4A, based on a RISC-V SoC, in the summer of 2023, I preordered it immediately, and in early January I finally received it. The results of my testing and the issues uncovered so far are below.

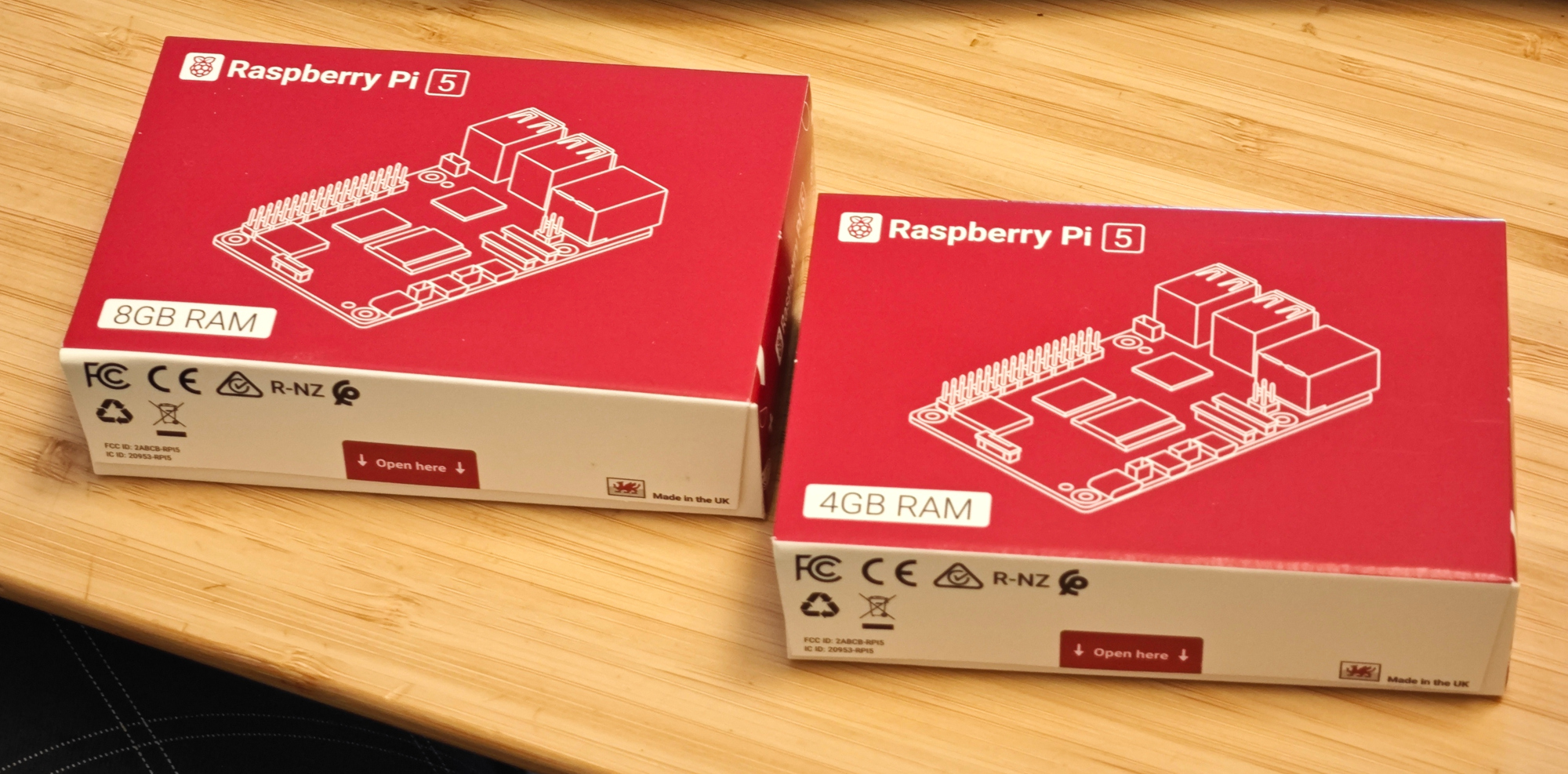

Ronald Reagan and Raspberry Pi

Sometime in the late 80s, Ronald Reagan told a joke:

You know there’s a ten year delay in the Soviet Union of the delivery of an automobile, and only one out of seven families in the Soviet Union own automobiles. There’s a ten year wait. And you go through quite a process when you’re ready to buy, and then you put up the money in advance.

And this happened to a fella, and this is their story, that they tell, this joke, that this man, he laid down his money, and then the fella that was in charge, said to him, ‘Okay, come back in ten years and get your car.’ And he said, ‘Morning or afternoon?’ and the fella behind the counter said, ‘Well, ten years from now, what difference does it make?’ and he said, ‘Well, the plumber’s coming in the morning.'

On the 28th of September, preorders for the Raspberry Pi 5 opened. I did not preorder immediately, but slept on it and placed my preorder at 6am the next day. Of course, I paid 100% in advance. What I did not know at the time was that every ~6 hours of waiting postponed delivery by ~1 month. So while the first preorders were delivered in early November (unless you are a celebrity), mine was fulfilled only in early January. Still, that is better than what happened with the Raspberry Pi 4 at the peak of the silicon shortage, when one could easily wait 6 months. Those who really needed one could surely have paid scalpers 200% of the price (I'm not sure why manufacturers hesitate to do it themselves). Hopefully, queues for electronics will get shorter over time, not longer (although with the current situation around Taiwan there could be surprises).

Now, holding 2 precious Pis, I can feel the privilege. The hype is partially justified: my CoreMark benchmarks confirm a 2.2x performance boost at 1.5x power consumption, and PCI-E is real. There is still quite a lot of room for further improvement until the Raspberry Pi reaches 100W peak power consumption :-)
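Taken together, and assuming both ratios are measured against the same baseline board on the same CoreMark run, the two numbers imply a rough energy-efficiency gain:

$$
\frac{\text{performance ratio}}{\text{power ratio}} = \frac{2.2}{1.5} \approx 1.47
$$

That is, about 47% more work per joule, even though peak power went up.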

Finishing 10 minute task in 2 hours using ChatGPT

Many of us have heard stories where someone was able to complete days' worth of work in minutes using AI, even outside their area of expertise. Indeed, LLMs often work (almost) miracles, but today I had a different experience. The task was almost trivial: generate a look-up table (LUT) for per-channel image contrast enhancement using some S-curve function, and apply it to an image. Let's not waste any time: just fire up ChatGPT (even v3.5 should do, it's just a formula), get Python code for a generic S-curve (the code conveniently came with a matplotlib visualization), and tune the parameters until you like the result before plugging it into the image processing chain. ChatGPT generated code for a logistic function, which is a common choice as it is among the simplest, but it cannot switch the curve from contrast enhancement to contrast reduction simply by changing its shape parameter.

The issue with the generated code, though, was that the graph showed it reducing contrast instead of increasing it. When I asked ChatGPT to correct this error, it apologized and produced increasingly broken code. Simply changing the shape parameter by hand was not possible due to a mathematical limitation: the formula is not generic enough. Well, it is not the end of the world: LLMs do have limits, especially on narrow-field tasks, so this is not really news. But the story does not end here.
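For reference, here is a minimal sketch of one generic S-curve that does not have this limitation, f(x) = x^a / (x^a + (1 - x)^a). It is an illustrative choice, not necessarily the formula used later in the full post: it fixes mid-grey (f(0.5) = 0.5) and its slope there is exactly a, so a > 1 increases contrast, 0 < a < 1 reduces it, and a = 1 is the identity. A logistic curve rescaled to [0, 1], by contrast, only steepens the midtones whatever the sign of its parameter. The helper names and the 8-bit, list-of-tuples image representation are assumptions for the example.

```python
def s_curve(x: float, a: float) -> float:
    """Symmetric S-curve mapping [0, 1] onto [0, 1] with f(0.5) = 0.5.

    a > 1 steepens the midtones (more contrast), 0 < a < 1 flattens them
    (less contrast), and a == 1 is the identity.
    """
    if not 0.0 <= x <= 1.0:
        raise ValueError("x must lie in [0, 1]")
    if a <= 0.0:
        raise ValueError("shape parameter a must be positive")
    num = x ** a
    return num / (num + (1.0 - x) ** a)


def build_lut(a: float, levels: int = 256) -> list[int]:
    """Tabulate the curve for integer pixel values 0..levels-1."""
    top = levels - 1
    return [round(s_curve(i / top, a) * top) for i in range(levels)]


def apply_luts(pixels: list[tuple[int, ...]], luts: list[list[int]]) -> list[tuple[int, ...]]:
    """Map every channel of every pixel through its own LUT."""
    return [tuple(lut[value] for lut, value in zip(luts, px)) for px in pixels]


if __name__ == "__main__":
    # A slightly different strength per channel, just to show per-channel LUTs.
    luts = [build_lut(1.6), build_lut(1.4), build_lut(1.2)]
    print(apply_luts([(30, 128, 220), (200, 60, 90)], luts))
```

Because the whole curve is precomputed into a 256-entry table, applying it costs one lookup per channel per pixel regardless of how expensive the formula is.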

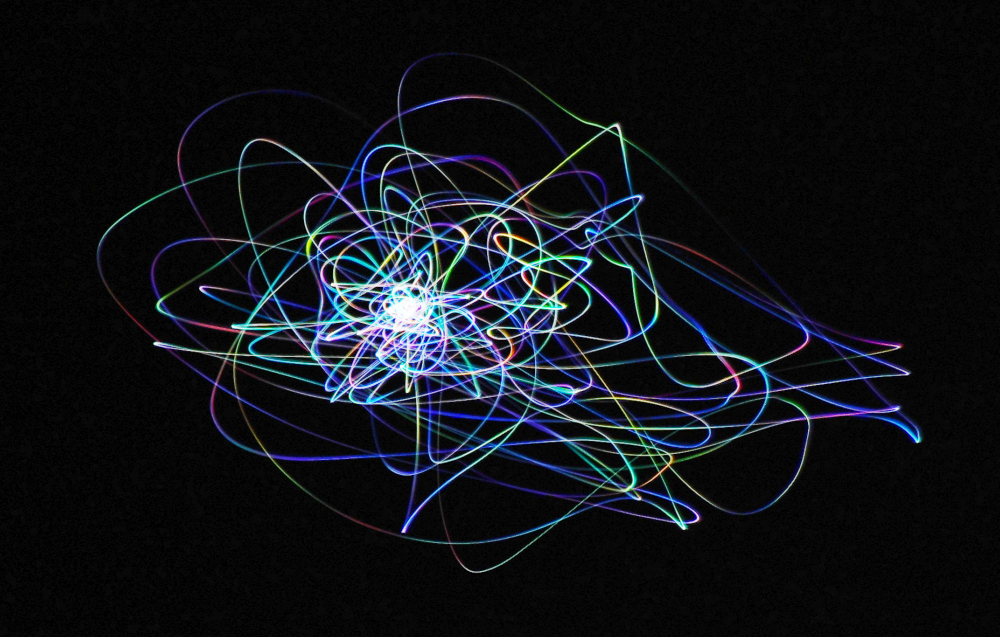

Sirius and color twinkling

Many have noticed that bright stars twinkle while planets do not. Recently, looking at Sirius at low elevation, I noticed that it twinkles not just in brightness but also in color. I took a Sigma 50-500mm lens at f/8 (62mm aperture) and made a 4-second exposure while letting the camera wobble, so that variations in brightness and/or color would be recorded. The results really surprised me.

Why does it happen? Stars twinkle because atmospheric turbulence acts as a random gradient-index "prism" that randomly shifts the image and splits colors (yes, even air has dispersion, and it is visible here!), so more or less light of each color randomly hits the lens aperture or the eye. For a star, the turbulence is sampled (in this case) over a cylinder 62mm in diameter and ~50km long, which makes the effect very visible. Jupiter, for example, averages turbulence over a cone that widens to 7.2m at 50km because of the planet's angular size, and this averaging dramatically reduces the contrast of twinkling. The same averaging (and reduction of twinkling) can happen with large telescopes (300mm+) even for stars, simply because they average over a larger volume of air.
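The cylinder-versus-cone argument is simple geometry, sketched below under loud assumptions: straight-line rays, the small-angle approximation, and no model of how turbulence is actually distributed with altitude. The ~30 arcsec angular diameter used for Jupiter is an assumed typical value (it varies with Earth-Jupiter distance), and the function names are hypothetical.

```python
import math

ARCSEC = math.pi / (180 * 3600)  # radians per arcsecond


def beam_diameter_m(aperture_m: float, angular_size_arcsec: float, path_m: float) -> float:
    """Width of the air column sampled at distance path_m from the aperture.

    Crude geometric model: the aperture itself plus the spread of rays
    from a source of the given angular size (small-angle approximation).
    A point-like star (angular size 0) gives a plain cylinder.
    """
    return aperture_m + path_m * angular_size_arcsec * ARCSEC


def area_ratio(d_big: float, d_small: float) -> float:
    """How many times larger one circular cross-section is than another."""
    return (d_big / d_small) ** 2


if __name__ == "__main__":
    star = beam_diameter_m(0.062, 0.0, 50_000)
    jupiter = beam_diameter_m(0.062, 30.0, 50_000)
    print(f"star: {star:.3f} m, Jupiter: {jupiter:.1f} m, "
          f"area ratio: {area_ratio(jupiter, star):.0f}x")
```

With these assumptions Jupiter's column is about 7.3m wide at 50km, in line with the figure above, and its cross-section is thousands of times larger than the star's, which is what smooths the fluctuations out.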

EVE Online - it's getting crowded in space

In the last few years we have seen more space games in the news: No Man's Sky, Starfield, the long-awaited Star Citizen and many more. In light of the new competition, EVE Online seems to have perked up too and started trying to get old players back into the game. It worked on me, so I dug up my old 13-year-old account to look around (that is easier now: you no longer need to pay just to look around). Of course, the game has changed in many ways over a decade. Also, new and returning players should now get 1'000'000 SP on first login, and that is the point of this post.

65B LLaMA on CPU

16 years ago, the dog ate my AI book. At the time (and long before that), the common response to «Why don't we have working AI yet, and why is it always 10 years away?» was that we couldn't make AI work even at 1% or 0.1% of human speed, even on the supercomputers of the day, therefore it's not about GFLOPS.

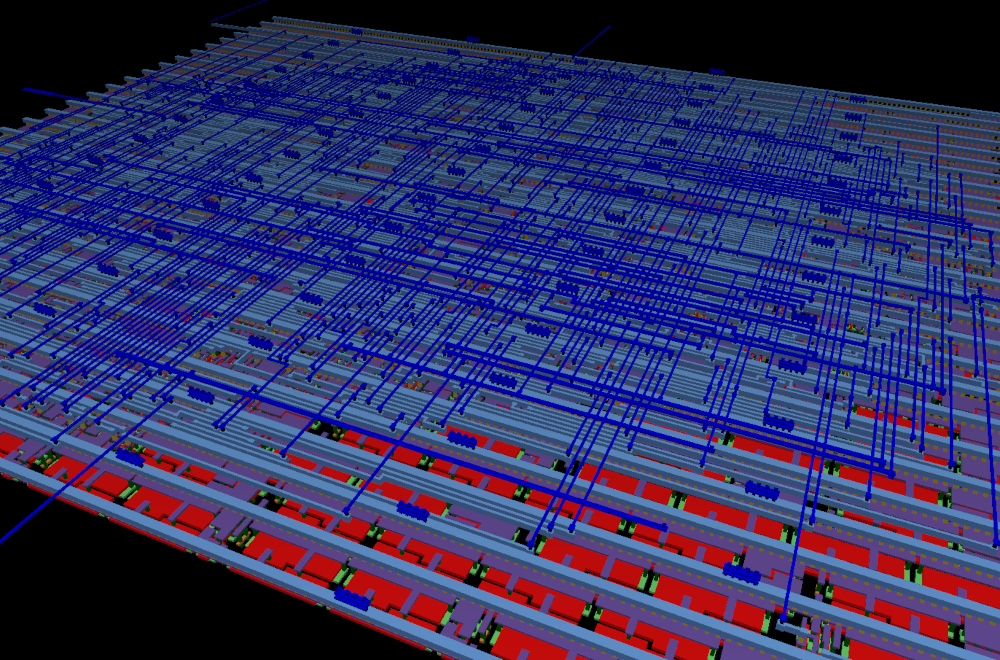

First tiny ASIC sent to manufacturing

5 years ago, making a microchip from high-level HDL with your own hands required around $300k worth of software licenses, the process was slow and the learning curve steep.

Yesterday I submitted my first silicon for manufacturing, and it was... different. In the evening my wife comes and asks, "How much time until the deadline?" I reply: "2 hours left, but I still have to learn Verilog." (Historically, my digital designs were done in VHDL or as schematics.)

All this became possible thanks to the Google SkyWater PDK and the OpenLane synthesis flow, which allowed anyone to design a microchip with no paperwork to sign and no licenses to buy. Then Tiny Tapeout (https://tinytapeout.com) by Matt Venn lowered the barrier even further (idea to tapeout in ~4 hours, including the learning curve).

This cake is a lie.

The Stable Diffusion model that was publicly released this week is a huge step forward in making AI widely accessible.

Yes, DALL-E 2 and Midjourney are impressive, but they are black boxes: you can play with them, but you can't touch the brain.

Stable Diffusion not only runs locally on relatively inexpensive hardware (i.e. it is sized for wide availability, not just bigger=better), it is also easy to modify, starting with tweaking the guidance scale, the pipeline and the noise schedulers. Access to the latent space is what I was dreaming about, and Andrej Karpathy's work on latent space interpolation (https://gist.github.com/karpathy/00103b0037c5aaea32fe1da1af553355) is just a glimpse of the many abilities some consider unnatural.
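Interpolating between two noise latents is commonly done with spherical rather than linear interpolation (slerp): high-dimensional Gaussian samples concentrate near a sphere, and a straight-line midpoint has a noticeably smaller norm than either endpoint. I believe the gist above uses a slerp helper of this kind, but the version below is an independent pure-Python sketch on plain lists (a real pipeline would operate on latent tensors), and the near-parallel fallback threshold is an assumed value.

```python
import math


def slerp(t: float, v0: list[float], v1: list[float],
          dot_threshold: float = 0.9995) -> list[float]:
    """Spherical linear interpolation between two non-zero latent vectors.

    t = 0 returns v0, t = 1 returns v1. When the vectors are nearly
    (anti)parallel the spherical formula is numerically unstable, so it
    falls back to plain linear interpolation.
    """
    if len(v0) != len(v1):
        raise ValueError("vectors must have the same length")
    norm0 = math.sqrt(sum(a * a for a in v0))
    norm1 = math.sqrt(sum(b * b for b in v1))
    # Cosine of the angle between the two vectors.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    if abs(dot) > dot_threshold:
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    theta = math.acos(dot)
    sin_theta = math.sin(theta)
    w0 = math.sin((1 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


if __name__ == "__main__":
    # Midpoint of two orthogonal unit vectors stays on the unit circle,
    # whereas linear interpolation would give [0.5, 0.5] (norm ~0.71).
    print(slerp(0.5, [1.0, 0.0], [0.0, 1.0]))
```

A latent walk would then be something like `[slerp(i / 9, latent_a, latent_b) for i in range(10)]`, where `latent_a` and `latent_b` are hypothetical flattened noise tensors, with each frame fed through the denoising pipeline.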

The model is perfect with food and good with humans and popular animals (which are apparently well represented in the training set), but rarer llamas/alpacas often come out anatomically incorrect, with results that are almost NSFW.

On an RTX 3080, the fp16 model completes 50 inference iterations in 6 seconds and barely fits into 10GB of VRAM. Just out of curiosity, I ran it on the CPU (5800X3D): it took 8 minutes, about 80 times slower, which is probably too painful for anything practical.

One more reason to buy a 4090... for work, I promise!

@BarsMonster
